The First 90 Days: How Japanese Enterprises Successfully Launch Vietnam Offshore Projects

You have signed the contract. The SOW is agreed. The team in Ha Noi City is ready. Now comes the part that nobody warned you about.

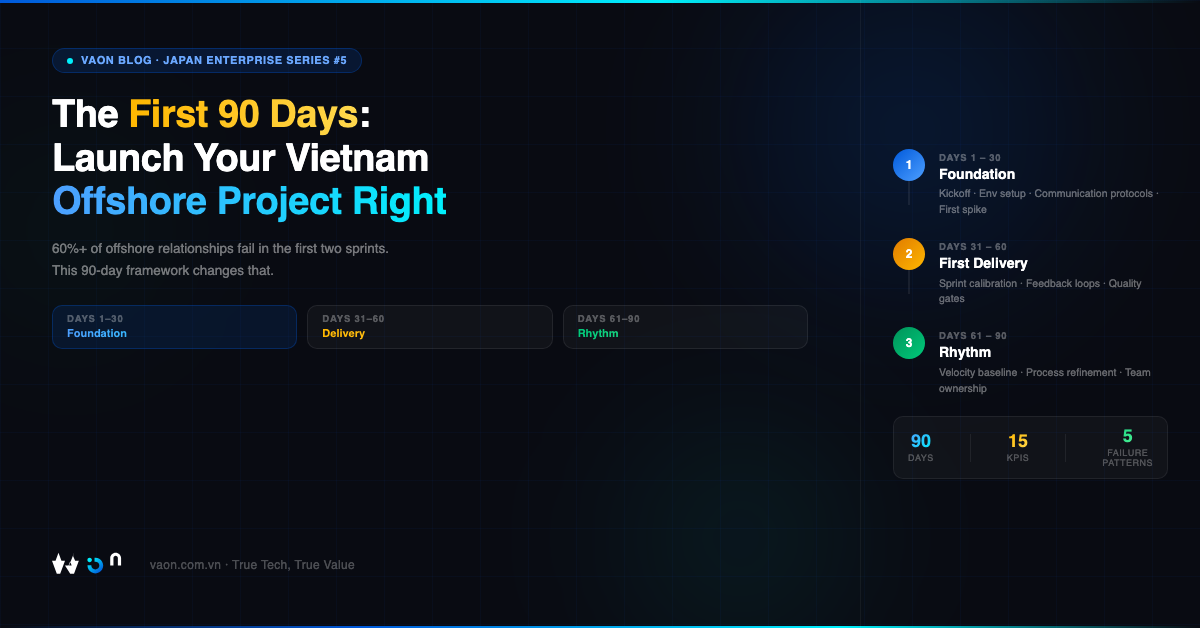

The first 90 days of an offshore engagement are statistically the highest-risk period of the entire relationship. According to surveys of IT managers across Japan and Southeast Asia, more than 60% of offshore relationships that ultimately fail show clear warning signs within the first two sprint cycles. Not because the vendor lacked technical skill. Not because the hourly rate was wrong. But because the foundation was never properly built.

This article is a practical guide for Japanese CTOs and IT managers who have just selected — or are about to select — a Vietnam-based development partner. It will walk you through a proven 90-day framework, the most common failure patterns, and the specific KPIs you should be tracking at each phase. The goal is not to make offshore development sound easy. It is to give you an honest map of the territory so that you can navigate it successfully.

🔳 Phase 1: Days 1-30 — Foundation

The single most important thing you can do in the first month is to treat it as an infrastructure investment, not a sprint. Many Japanese enterprises make the mistake of expecting code output in week one. The team that ships code before the foundation is solid will cost you far more in rework and frustration than one that spends the first two weeks configuring environments, aligning on definitions, and building communication scaffolding.

Here is what a rigorous Day 1-30 looks like.

・Kickoff: More Than a Ceremony

The kickoff meeting is not a formality. It is the moment where the operational contract — separate from the legal one — is established between your team and theirs. This meeting should happen in a structured, two-part format.

Part one is for leadership: both CTOs or IT managers meet to align on business goals, not technical requirements. What does success look like at 90 days? At 6 months? What are the non-negotiable quality thresholds? What are the risks both sides are carrying? This conversation should happen before any engineer joins the call.

Part two brings in the full team on both sides for a technical alignment session. Repositories are introduced. Architecture decisions are documented. Sprint cadence, definition of done, escalation paths, and communication channels are established explicitly. Do not assume that "agile" means the same thing to a developer in Ha Noi City as it does to your team in Tokyo. Make the definitions concrete.

At VAON, for example, every new engagement begins with what we call a "契約書の外の合意" — the agreement beyond the contract. This is a living document that captures working norms, escalation protocols, and communication expectations that a legal SOW never covers. It takes two hours to create and saves hundreds of hours of misalignment later.

・Environment Setup: The Hidden Time Sink

Repository access, CI/CD pipeline configuration, VPN setup, staging environment provisioning, test account credentials, third-party API access — each of these takes time, and each has dependencies. In enterprises with mature IT security policies (which describes most Japanese companies), provisioning access for external vendors involves multiple approvals and handoffs.

Build a dependency map on Day 1. Identify every system the offshore team needs access to. Assign an internal owner for each access item. Set a target completion date of Day 10 at the absolute latest. Any access item still pending by Day 14 is a project risk that needs escalation.
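The dependency map can be as simple as a tracked list with one owner per item. The sketch below is a minimal illustration, assuming the Day 10 target and Day 14 escalation threshold described above; the `AccessItem` structure, the system names, and the owner names are hypothetical and not part of any specific tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

TARGET_DAY = 10      # target completion day from the text
ESCALATION_DAY = 14  # an item still pending on this day is a project risk


@dataclass
class AccessItem:
    system: str                        # illustrative name, e.g. "git repository"
    owner: str                         # internal owner on the Japanese side
    granted_on: Optional[date] = None  # None while access is still pending


def access_status(item: AccessItem, kickoff: date, today: date) -> str:
    """Classify one access item against the Day 10 / Day 14 thresholds."""
    if item.granted_on is not None:
        return "done"
    day_number = (today - kickoff).days + 1  # the kickoff date counts as Day 1
    if day_number >= ESCALATION_DAY:
        return "escalate"
    if day_number > TARGET_DAY:
        return "late"
    return "pending"


# Hypothetical example data
kickoff = date(2025, 4, 1)
items = [
    AccessItem("git repository", "Sato", granted_on=date(2025, 4, 3)),
    AccessItem("VPN", "Tanaka"),
]
today = kickoff + timedelta(days=13)  # Day 14
report = {item.system: access_status(item, kickoff, today) for item in items}
print(report)
```

Reviewing a report like this in each check-in makes idle time visible to both sides before it turns into a trust problem.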

The most common failure in this phase is not technical. It is administrative. The offshore team sits idle for two weeks waiting for repository access, while the Japanese side assumes development has begun. This misalignment creates the first erosion of trust.

・Communication Protocols: Explicit Beats Assumed

Vietnam (UTC+7) is two hours behind Tokyo (JST, UTC+9). This is one of the advantages of Vietnam over other offshore destinations: the working days overlap enough for real-time collaboration, particularly during Japanese afternoon hours.
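To make the overlap concrete, the following sketch computes the shared working window for one day. It assumes both offices work 09:00 to 18:00 local time, which is an illustrative assumption rather than a figure from this article. Fixed UTC offsets are used because neither Japan nor Vietnam observes daylight saving time.

```python
from datetime import date, datetime, time, timedelta, timezone

JST = timezone(timedelta(hours=9), "JST")  # Tokyo
ICT = timezone(timedelta(hours=7), "ICT")  # Vietnam


def overlap_window(day, tokyo_hours=(time(9), time(18)),
                   vietnam_hours=(time(9), time(18))):
    """Return (start, end) of shared working hours in Tokyo time, or None."""
    tokyo_start = datetime.combine(day, tokyo_hours[0], tzinfo=JST)
    tokyo_end = datetime.combine(day, tokyo_hours[1], tzinfo=JST)
    vn_start = datetime.combine(day, vietnam_hours[0], tzinfo=ICT)
    vn_end = datetime.combine(day, vietnam_hours[1], tzinfo=ICT)
    # Aware datetimes compare by absolute time, so max/min work across zones.
    start, end = max(tokyo_start, vn_start), min(tokyo_end, vn_end)
    if start >= end:
        return None
    return start.astimezone(JST), end.astimezone(JST)


start, end = overlap_window(date(2025, 4, 1))
print(start.strftime("%H:%M"), "to", end.strftime("%H:%M"), "JST")
```

Under these assumed hours, the window runs from late morning to the end of the Tokyo working day, which is where synchronous check-ins fit most naturally.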

Establish the following explicitly, in writing, shared in a document both teams can reference:

1. Primary async channel (e.g., Slack workspace, Microsoft Teams) and expected response time (e.g., within 2 business hours during overlap window, next morning for messages sent after overlap)

2. Daily or twice-weekly synchronous check-ins — 30 minutes maximum, structured agenda, written summary afterward

3. Escalation path: who contacts whom, through which channel, within what timeframe, for which category of issue

4. Language of record: all technical decisions documented in writing (English or Japanese — decide this explicitly)

5. Video call etiquette: cameras on for all team calls, not just status updates

If your partner's team includes JLPT N1 or N2 certified engineers who can communicate directly in Japanese, use that capability deliberately. Direct Japanese-language communication eliminates the interpretation layer that causes subtle misalignments in requirements. At VAON, all client-facing engineers hold JLPT N2 or higher, which means requirement discussions can happen in Japanese without a project manager acting as intermediary.

・Week 2-4: First Technical Deliverables

The first deliverable should be a proof-of-concept or spike — not a production feature. This accomplishes several things: it forces both teams to validate the development environment end-to-end, it surfaces technical assumptions that need correction, and it gives you your first real data point on velocity and quality.

Define the acceptance criteria for this spike before the sprint starts. Review the output together. The quality of this first review session tells you more about the health of your engagement than any status report will.

By the end of Day 30, you should have:

1. All environments provisioned and accessible

2. One completed technical spike with documented learnings

3. Communication protocols tested and adjusted based on real experience

4. A backlog of at least 6-8 weeks of prioritized work with acceptance criteria

5. A shared definition of done that both teams have agreed to in writing

🔳 Phase 2: Days 31-60 — First Delivery Cycle

This phase is where the real work begins — and where many offshore relationships show their first serious cracks. Sprint 1 and Sprint 2 are critical diagnostic periods. The code quality, the communication patterns, and the team's responsiveness to feedback in these weeks will predict the trajectory of the next 12 months.

・Sprint 1: Calibration, Not Production

Treat Sprint 1 as a calibration sprint. The objective is not to ship the maximum amount of functionality. The objective is to establish a rhythm that is sustainable, to identify gaps in the team's understanding of your domain, and to calibrate your own expectations against reality.

Common failure pattern: A Japanese CTO loads Sprint 1 with high-priority features. The offshore team ships something that only partially meets the brief, the CTO is disappointed, and trust begins to erode. The cycle repeats, and by Sprint 4 the relationship is already in crisis.

The correct approach: Sprint 1 contains 60-70% of what you might otherwise load it with. The remaining capacity is reserved for setup tasks, documentation, and iteration on feedback. Velocity will naturally increase as the team learns your codebase and your standards.
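As a quick worked example of the 60-70% rule, the sketch below turns a nominal sprint capacity into a Sprint 1 commitment range. The 40-point capacity is a hypothetical figure chosen only for illustration.

```python
def sprint1_commitment(nominal_points: float,
                       low: float = 0.60, high: float = 0.70) -> tuple[float, float]:
    """Return the (minimum, maximum) story points to load into Sprint 1."""
    if nominal_points < 0:
        raise ValueError("nominal_points must be non-negative")
    return round(nominal_points * low, 1), round(nominal_points * high, 1)


# Hypothetical team that would normally be loaded with 40 points
commit_min, commit_max = sprint1_commitment(40)
print(commit_min, commit_max)
```

The capacity left over is what the paragraph above reserves for setup tasks, documentation, and iteration on feedback.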

・Feedback Loops: The Architecture of Trust

How you give feedback in the first two sprints defines the culture of the entire engagement. There are two failure modes here that are common among Japanese enterprises.

The first is under-feedback. Japanese business culture prizes indirect communication and harmony, which sometimes translates to vague feedback or approval of work that does not fully meet standards. The offshore team interprets silence or mild approval as success. Problems compound in silence.

The second is over-feedback delivered badly. Detailed critique submitted as a long email at 11pm JST, without context or priority ordering, creates anxiety and confusion rather than clarity.

The effective middle ground: Structured feedback cycles. After every sprint review, share feedback in a templated format — what was completed successfully, what needs revision (with specific acceptance criteria for the revision), and what is deferred to the next sprint. Deliver this within 48 hours of the sprint review. Assign a severity level to each issue (blocking, major, minor). Give the team the opportunity to ask clarifying questions before they begin revisions.
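One way to keep this feedback consistent from sprint to sprint is to capture it in a small template. The sketch below is an assumption about how such a template might be structured, not a VAON format; the class names and the sample items are hypothetical. Revision items are sorted so that blocking issues always appear first.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Severity(IntEnum):
    BLOCKING = 0
    MAJOR = 1
    MINOR = 2


@dataclass
class RevisionItem:
    title: str
    severity: Severity
    acceptance_criteria: str  # what "fixed" means for this revision


@dataclass
class SprintFeedback:
    sprint: int
    completed: list[str] = field(default_factory=list)
    revisions: list[RevisionItem] = field(default_factory=list)
    deferred: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the three feedback sections as plain text."""
        lines = [f"Sprint {self.sprint} feedback", "Completed:"]
        lines += [f"  - {c}" for c in self.completed]
        lines.append("Needs revision:")
        for item in sorted(self.revisions, key=lambda r: r.severity):
            lines.append(f"  - [{item.severity.name.lower()}] {item.title} "
                         f"(accept when: {item.acceptance_criteria})")
        lines.append("Deferred to next sprint:")
        lines += [f"  - {d}" for d in self.deferred]
        return "\n".join(lines)


feedback = SprintFeedback(
    sprint=1,
    completed=["Login API"],
    revisions=[
        RevisionItem("Password reset email", Severity.MINOR, "subject line is localized"),
        RevisionItem("Checkout total", Severity.BLOCKING, "tax is included in the total"),
    ],
    deferred=["CSV export"],
)
text = feedback.render()
print(text)
```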

・Quality Gates: Define Them Before You Need Them

By Day 45, you should have explicit quality gates in place for the following:

1. Code review: who reviews, what the review checklist covers, what percentage of code requires review before merge

2. Testing: unit test coverage threshold, integration test requirements, performance benchmarks if applicable

3. Security: which OWASP categories are in scope, how vulnerabilities are tracked and resolved

4. Documentation: what must be documented at the code level, what must be documented at the feature level

If your partner uses AI-assisted development tools — Claude Code, automated test generation, AI code review — define how these outputs are validated. AI-native teams like VAON build these validation gates into the standard workflow, so that AI assistance accelerates velocity without sacrificing quality. The key question to ask your partner: "Show me how an AI-generated function gets reviewed before it merges to main."
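A quality gate is easiest to discuss when it is written down as a check that either passes or fails. The following is a minimal sketch under stated assumptions: the 80% coverage threshold, the single required approval, and the rule that AI-assisted changes need one additional human reviewer are all hypothetical values for illustration, to be replaced with whatever both teams agree on.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    human_approvals: int         # approvals from human reviewers
    unit_coverage: float         # coverage of changed code, 0.0 to 1.0
    open_security_findings: int  # unresolved findings in the agreed scope
    docs_updated: bool           # required documentation is in place
    ai_assisted: bool = False    # any part was generated by an AI tool


def gate_failures(pr: PullRequest, min_coverage: float = 0.80,
                  min_approvals: int = 1) -> list[str]:
    """Return the reasons a pull request may not merge; empty means it passes."""
    failures = []
    # Hypothetical policy: AI-assisted changes need one additional human reviewer.
    required = min_approvals + (1 if pr.ai_assisted else 0)
    if pr.human_approvals < required:
        failures.append(f"needs {required} human approval(s), has {pr.human_approvals}")
    if pr.unit_coverage < min_coverage:
        failures.append(f"unit coverage {pr.unit_coverage:.0%} is below {min_coverage:.0%}")
    if pr.open_security_findings > 0:
        failures.append(f"{pr.open_security_findings} open security finding(s)")
    if not pr.docs_updated:
        failures.append("documentation not updated")
    return failures


pr = PullRequest(human_approvals=1, unit_coverage=0.85,
                 open_security_findings=0, docs_updated=True, ai_assisted=True)
print(gate_failures(pr))
```

In this example the only failure is the missing second reviewer, which is the kind of concrete answer the question above is meant to elicit from a partner.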

・Sprint 2: Velocity Measurement Begins

By Sprint 2, you have enough data to begin measuring team velocity. Story points are a relative estimate calibrated by each team, so do not compare your Vietnam team's velocity to your Tokyo team's velocity as if both used the same scale. Compare each team only against its own history over time.

Track the following metrics from Sprint 2 onward:

1. Story points completed versus committed (aim for 80%+ by Sprint 3)

2. Bug rate: defects found in QA per story point delivered

3. Rework rate: stories that return from code review or QA

4. Response time: average time from question asked to answer received

5. Blocker resolution time: average time from blocker reported to blocker resolved

These five metrics give you an early warning system. A rising rework rate in Sprint 2 usually signals a misalignment in acceptance criteria rather than a quality problem with the team. A slow blocker resolution time often points to an access or process problem on your side rather than the vendor's.
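The five metrics can be computed from data most issue trackers already hold. The sketch below assumes one record per sprint; the `SprintData` fields and the sample numbers are hypothetical, and rework rate is calculated here as returned stories divided by total stories, which is one reasonable reading of the definition above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SprintData:
    committed_points: int
    completed_points: int
    qa_defects: int              # defects found in QA
    stories_total: int
    stories_returned: int        # returned from code review or QA
    response_hours: list[float]  # question asked to answer received
    blocker_hours: list[float]   # blocker reported to blocker resolved


def _ratio(numerator: float, denominator: float) -> float:
    return numerator / denominator if denominator else 0.0


def _avg(values: list[float]) -> float:
    return mean(values) if values else 0.0


def sprint_metrics(s: SprintData) -> dict[str, float]:
    """Compute the five early-warning metrics for one sprint."""
    return {
        "completion_rate": _ratio(s.completed_points, s.committed_points),
        "bug_rate": _ratio(s.qa_defects, s.completed_points),
        "rework_rate": _ratio(s.stories_returned, s.stories_total),
        "avg_response_hours": _avg(s.response_hours),
        "avg_blocker_hours": _avg(s.blocker_hours),
    }


# Hypothetical Sprint 2 data
sprint2 = SprintData(committed_points=30, completed_points=24, qa_defects=12,
                     stories_total=10, stories_returned=3,
                     response_hours=[1.0, 3.0], blocker_hours=[24.0, 72.0])
metrics = sprint_metrics(sprint2)
print(metrics)
```

Tracking the same dictionary for every sprint makes trends visible, which matters more than any single sprint's value.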

🔳 Phase 3: Days 61-90 — Rhythm Establishment

If Phases 1 and 2 went reasonably well, you will feel a shift somewhere between Day 50 and Day 65. The team will start anticipating your questions. Pull requests will need fewer revision cycles. The daily check-ins will become shorter and more focused. This is the beginning of rhythm.

The objective of Phase 3 is to institutionalize that rhythm and to expand the team's scope of ownership.

・Velocity Benchmarking

By Day 90, you should have 4-5 sprints of data. Use this to establish a velocity baseline — the range of story points the team reliably delivers per sprint under normal conditions. This baseline is what you use for release planning and capacity discussions.

Do not set the baseline as the team's best sprint. Set it as the median of sprints 3-5 (discarding Sprint 1 and Sprint 2 as calibration periods). Build your roadmap around this realistic baseline, not an optimistic one.
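The baseline rule above reduces to a short calculation: drop the calibration sprints, then take the median of the rest. The sketch below uses hypothetical story point totals for five sprints.

```python
from statistics import median


def velocity_baseline(points_by_sprint: list[float],
                      calibration_sprints: int = 2) -> float:
    """Median velocity after discarding the first calibration sprints."""
    usable = points_by_sprint[calibration_sprints:]
    if not usable:
        raise ValueError("need at least one sprint after the calibration period")
    return median(usable)


# Hypothetical story points delivered in Sprints 1 through 5
history = [18, 22, 26, 31, 28]
baseline = velocity_baseline(history)
print(baseline)
```

Here the best sprint delivered 31 points, but the baseline used for planning is 28. The median is less sensitive to a single unusually good or bad sprint than the mean would be.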

Also benchmark quality at Day 90. What is the current defect escape rate (bugs reaching production)? What is the code review cycle time? What is the deployment frequency? These numbers tell you whether the team is improving, plateauing, or declining.

・Process Refinement

Every retrospective in Phase 3 should produce at least one concrete process change that is implemented in the following sprint. Not discussed — implemented. This discipline prevents retrospectives from becoming complaint sessions and keeps the team in a continuous improvement posture.

Common refinements teams discover in this phase:

1. The daily check-in should move 30 minutes earlier to better capture the overlap window

2. Acceptance criteria for a particular domain (e.g., payment flows, authentication) need a more detailed template

3. A specific type of bug is recurring, pointing to a gap in the development checklist

4. A team member has domain expertise that is not being fully utilized

・Expanding Ownership

By Day 90, the offshore team should own at least one domain or module independently — meaning they can receive a requirement, estimate it, build it, test it, and deliver it without the Japanese side micromanaging each step. If this has not happened by Day 90, it is a sign that either the requirements process is too vague or the team's technical confidence in the domain is insufficient and needs targeted development.

🔳 VAON's Onboarding Methodology

Having launched offshore engagements across multiple Japanese enterprise clients, VAON has developed a structured onboarding methodology built on three principles.

First: Documentation-first culture. Every decision, every architectural choice, every deviation from the original plan is documented in a shared system before the next sprint begins. This creates a shared memory that new team members can onboard from and that the Japanese side can audit at any time.

Second: Over-communicate in Phase 1, normalize in Phase 3. We deliberately increase the communication frequency in the first month — more check-ins, more written summaries, more explicit confirmations. This feels redundant. It is not. It surfaces misalignments before they become problems. By Phase 3, communication naturally becomes leaner because the shared context is deep.

Third: Client-side ambassador. For every engagement, we designate one VAON engineer as the client-side ambassador — the person whose primary responsibility is to understand the client's business context, not just the technical requirements. This person attends business-side discussions that engineers typically would not join. The result is a team that builds with business judgment, not just technical correctness.

🔳 Common Failure Patterns

1. Failure Pattern 1: Skipping the Foundation Phase

The pressure to show output in Week 1 is understandable. It is also almost always a mistake. Teams that skip or compress the foundation phase spend months recovering from the technical debt and misalignment it creates.

2. Failure Pattern 2: Treating Offshore as Remote Employees

An offshore team is not a distributed internal team. They have a different organizational culture, different management context, and different visibility into your company's priorities. Managing them as if they were remote Tokyo employees — with the same level of implicit context — will create constant confusion. Be more explicit than you think you need to be.

3. Failure Pattern 3: Letting Blockers Age

Any blocker that is not resolved within 48 hours should be escalated to the project manager on both sides. Blockers that age for a week or more create idle time, frustration, and the perception that the Japanese side is not committed to the engagement. Move fast on blockers.

4. Failure Pattern 4: Changing Requirements Without Process

Mid-sprint requirement changes are the single largest predictor of offshore relationship degradation. Every change request, however small, should go through a defined process: documented in the backlog, reviewed in the next sprint planning, estimated and prioritized. No verbal requirement changes. No "just add this to the current sprint" requests.

5. Failure Pattern 5: Measuring Output Instead of Outcomes

Story points delivered is a proxy metric, not a business metric. By Day 90, connect the team's output to a business outcome — user acquisition, transaction success rate, deployment frequency, error rate reduction. Teams that understand why they are building something build better than teams that are just executing tickets.

🔳 KPIs for Each Phase

Phase 1 KPIs (Days 1-30):

1. Environment provisioning complete by Day 10

2. Kickoff documentation distributed within 48 hours of kickoff meeting

3. Technical spike delivered and reviewed by Day 25

4. Backlog populated to 6-week depth by Day 30

5. Communication protocol document signed off by both sides by Day 7

Phase 2 KPIs (Days 31-60):

1. Sprint velocity: 70%+ completion rate by Sprint 2

2. Bug rate: fewer than 1.5 defects per story point in Sprint 2

3. Feedback delivery time: within 48 hours of sprint review

4. Blocker resolution time: under 48 hours average

5. Definition of done compliance: 100% of merged PRs meet checklist

Phase 3 KPIs (Days 61-90):

1. Velocity baseline established from Sprints 3-5

2. Defect escape rate: 0 critical bugs in production in final 30 days

3. Team ownership: at least one domain owned end-to-end by offshore team

4. Retrospective output: at least one implemented process change per sprint

5. Client satisfaction: informal check-in with business stakeholder confirms team is meeting expectations

🔳 Closing Thoughts

The first 90 days are not about proving the offshore model works. They are about building the specific working relationship between your organization and your partner that makes everything that comes after possible. The teams that invest in this foundation — that resist the temptation to shortcut it — typically reach full productivity by Month 4 and are delivering high-quality output autonomously by Month 6.

The teams that skip the foundation are still renegotiating the basics of their working relationship 12 months later.

If you are evaluating a Vietnam development partner and they cannot articulate their own onboarding methodology in detail — including how they handle blockers, how they structure the first sprint, and how they establish communication protocols — that is a signal worth taking seriously.

A partner worth working with has thought carefully about this phase. Because they know it is the one that determines whether everything else succeeds.

About VAON: VAON Việt Nam is an AI-native software development company based in Ha Noi City, serving Japanese enterprise clients. Our team holds JLPT N1/N2 certifications, enabling direct Japanese-language technical collaboration. Learn more at vaon.com.vn/en or contact us for a consultation on your offshore engagement plan.