In the digital era, “AI integration” is no longer a luxury feature; it has become a baseline expectation for staying competitive. From automating customer support with chatbots and analyzing big data to personalizing user experiences, AI is permeating every corner of modern technology products.

However, there is a harsh reality: many businesses eagerly integrate AI into their existing systems, only to find that their entire platform quickly crashes or becomes unbearably slow.

Why does this happen? And how can you design a truly scalable, AI-ready system? Let’s explore in the article below.

Why do traditional architectures often “break” when integrating AI?

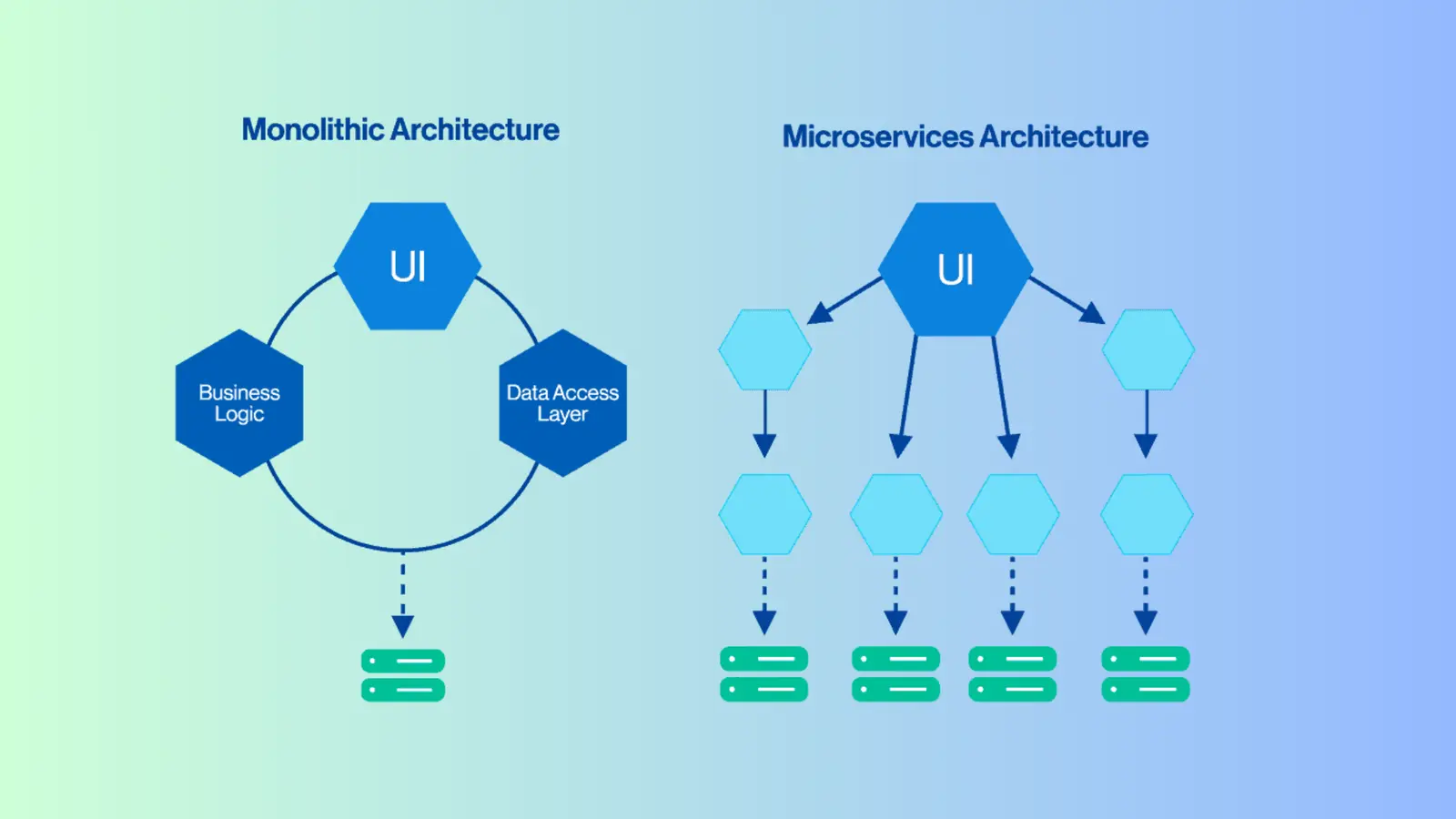

Most traditional software systems, especially monolithic architectures, are designed for deterministic tasks and low-latency responses. For example, a database query to retrieve user information might take around 50 ms.

However, when you embed AI models (especially Generative AI or heavy Machine Learning workloads) into this architecture, three critical weaknesses emerge:

1. Latency Bottleneck

AI APIs often take anywhere from several seconds to tens of seconds to respond (e.g., while an LLM generates text). If the system uses synchronous communication, each web server thread is blocked for the full duration of the AI call. Even a small number of concurrent AI requests can exhaust the thread pool, at which point unrelated requests such as login or checkout queue up and time out, and the whole system appears to be down.
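A rough back-of-the-envelope calculation makes the bottleneck concrete. The sketch below assumes a pool of 200 threads and a 10-second AI call; both numbers are illustrative assumptions, and only the 50 ms database figure comes from the text above.

```python
def max_requests_per_second(pool_size: int, latency_ms: int) -> float:
    """Upper bound on throughput when each request holds one thread until it finishes."""
    return pool_size * 1000 / latency_ms


def threads_in_use(arrivals_per_second: int, latency_ms: int) -> float:
    """Little's law: average requests in flight = arrival rate x time per request."""
    return arrivals_per_second * latency_ms / 1000


POOL_SIZE = 200          # assumed thread pool size
DB_LATENCY_MS = 50       # typical database query from the article
AI_LATENCY_MS = 10_000   # assumed 10-second LLM call

print(max_requests_per_second(POOL_SIZE, DB_LATENCY_MS))  # 4000.0
print(max_requests_per_second(POOL_SIZE, AI_LATENCY_MS))  # 20.0
print(threads_in_use(25, AI_LATENCY_MS))                  # 250.0
```

Under these assumptions, the same pool that could serve 4,000 database-style requests per second tops out at 20 AI requests per second, and just 25 AI requests per second would need more threads than the pool has.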

2. Asymmetric Resource Consumption

Core business functions (like login or shopping carts) consume minimal CPU/RAM, while AI workloads (e.g., vector search or model inference) demand far more CPU, memory, and often GPU capacity. In a monolith, you cannot allocate resources to the AI layer independently: you either over-provision every instance and waste capacity, or let AI workloads starve the rest of the application.

3. Rigid Data Pipelines

AI thrives on data. However, traditional systems often lock data within relational databases (SQL) and lack real-time, event-driven data pipelines. This prevents AI from accessing continuous data streams needed for learning and accurate predictions.
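As a minimal sketch of what "event-driven" means here, the example below publishes an event every time an order is created, so an AI-side consumer receives the data as it happens instead of querying the relational database later. The `EventBus` class, the `order.created` topic, and the `feature_store` list are hypothetical stand-ins for a real broker and data store.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Tiny in-memory publish/subscribe bus (stand-in for a real event broker)."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver the event to every handler registered for this topic.
        for handler in self._handlers[topic]:
            handler(event)


bus = EventBus()
feature_store: list[dict] = []  # hypothetical sink that the AI layer reads from
bus.subscribe("order.created", feature_store.append)


def create_order(order_id: int, total_cents: int) -> None:
    # A real system would also write the order to the relational database here.
    bus.publish("order.created", {"order_id": order_id, "total_cents": total_cents})


create_order(1, 1999)
create_order(2, 500)
print(len(feature_store))  # 2
```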

Modular & Microservices: The “Antibodies” of AI-Ready Systems

To solve scalability challenges, you can’t simply inject AI API calls into your existing business logic. The optimal approach is to adopt a modular or microservices architecture, completely separating the “AI brain” from the “operational body.”

Here are three key principles for designing highly scalable systems:

1. Asynchronous Communication

Instead of making the web server wait for AI responses, introduce a message queue (e.g., Kafka, RabbitMQ). When a user sends a request, the system pushes it to the queue and immediately returns a “Processing” response, ideally with a job ID the client can use to match the result later. AI worker nodes then consume tasks from the queue asynchronously, process them, and send results back via webhook or WebSocket. Web server threads are released right away instead of being held for the duration of the AI call.
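The flow can be sketched with Python's standard library alone. Here `queue.Queue` stands in for Kafka or RabbitMQ, a background thread plays the AI worker node, and the `results` dictionary stands in for the webhook or WebSocket delivery; `fake_ai_model`, `submit`, and `worker` are hypothetical names chosen for illustration.

```python
import queue
import threading
import uuid

jobs: queue.Queue = queue.Queue()   # stand-in for a message broker
results: dict[str, str] = {}        # stand-in for webhook/WebSocket delivery


def fake_ai_model(prompt: str) -> str:
    """Placeholder for a slow model call."""
    return prompt.upper()


def submit(prompt: str) -> dict:
    """What the web server does: enqueue the job and return immediately."""
    job_id = str(uuid.uuid4())
    jobs.put((job_id, prompt))
    return {"job_id": job_id, "status": "processing"}


def worker() -> None:
    """What an AI worker node does: consume jobs until told to stop."""
    while True:
        item = jobs.get()
        if item is None:            # sentinel value: shut down
            jobs.task_done()
            break
        job_id, prompt = item
        results[job_id] = fake_ai_model(prompt)
        jobs.task_done()


thread = threading.Thread(target=worker, daemon=True)
thread.start()

ack = submit("summarize this ticket")   # returns without waiting for the model
jobs.join()                             # demo only: wait so the result can be printed
print(ack["status"], "->", results[ack["job_id"]])

jobs.put(None)
thread.join()
```

The important property is that `submit` never calls the model itself, so the request thread is free again as soon as the job is on the queue.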

2. Independent Auto-scaling

With a microservices architecture, you can scale the AI layer independently. Under normal conditions, the system might run only two AI nodes. But during traffic spikes (e.g., marketing campaigns), it can automatically scale up to 20 AI nodes without affecting other services like order management.
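One simple way to picture such a scaling rule is to derive the number of AI nodes from the queue backlog. The sketch below is a hypothetical policy, not any particular orchestrator's algorithm: the 2 and 20 node limits come from the example above, while `jobs_per_node` is an assumed capacity figure.

```python
import math


def desired_ai_nodes(queued_jobs: int, jobs_per_node: int = 50,
                     min_nodes: int = 2, max_nodes: int = 20) -> int:
    """Scale AI workers with the backlog, clamped to a fixed range."""
    needed = math.ceil(queued_jobs / jobs_per_node)
    return max(min_nodes, min(max_nodes, needed))


print(desired_ai_nodes(0))      # 2  (quiet period: stay at the minimum)
print(desired_ai_nodes(400))    # 8  (moderate backlog)
print(desired_ai_nodes(5000))   # 20 (campaign spike: capped at the maximum)
```

Because the rule only looks at the AI queue, services such as order management keep their own, unrelated scaling settings.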

3. Flexible Pluggability

Design your system to be plug-and-play. The core system defines clear interfaces, and any AI service that adheres to the expected contract (e.g., JSON over HTTP or Protobuf messages over gRPC) can be plugged in or replaced without disrupting the rest of the system.
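In code, the "clear interface" can be as small as a single method signature. The sketch below uses `typing.Protocol` to define it; the `AIService` interface, the two implementations, and `route_message` are all hypothetical names used only to illustrate the idea.

```python
from typing import Protocol


class AIService(Protocol):
    """The contract the core system depends on; any implementation must match it."""

    def analyze(self, text: str) -> dict: ...


class KeywordSentiment:
    """A trivial implementation: flags messages containing negative keywords."""

    NEGATIVE = ("broken", "refund", "angry")

    def analyze(self, text: str) -> dict:
        hit = any(word in text.lower() for word in self.NEGATIVE)
        return {"sentiment": "negative" if hit else "neutral"}


class AlwaysNeutral:
    """A drop-in replacement with the same method signature."""

    def analyze(self, text: str) -> dict:
        return {"sentiment": "neutral"}


def route_message(service: AIService, text: str) -> str:
    """Core business logic: knows the interface, not the implementation."""
    result = service.analyze(text)
    return "escalate" if result["sentiment"] == "negative" else "standard"


message = "My order arrived broken"
print(route_message(KeywordSentiment(), message))  # escalate
print(route_message(AlwaysNeutral(), message))     # standard
```

Swapping one service for the other changes the outcome but requires no edits to `route_message`, which is the point of the pluggable design.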

Practical Case Study: Integrating an AI Module (OneBot) into an Existing System

To make it easier to visualize, imagine you want to integrate OneBot (an AI module specialized in natural language processing) into a legacy CRM system. If built with an “AI-Ready” approach, the process becomes extremely smooth:

- No core changes, just an added API Gateway: There’s no need to rebuild the CRM. Simply configure an API Gateway to route customer messages to the OneBot system.

- Event-Driven Communication: Instead of the CRM continuously polling OneBot for results, OneBot operates as an independent module. Once processing is complete, it automatically triggers a webhook to send structured data back to the CRM, which then creates a ticket.

- Easy Model Upgrades: Because OneBot is integrated as a separate module, upgrading the underlying AI model (e.g., from GPT-3.5 to GPT-4) requires no changes to your CRM codebase, as long as the structured data OneBot sends back keeps the same format.
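On the CRM side, the webhook step might look like the sketch below. OneBot's actual payload format is not specified here, so the field names (`customer_id`, `intent`, `summary`), the `Ticket` class, and the priority rule are assumptions made purely for illustration.

```python
import json
from dataclasses import dataclass


@dataclass
class Ticket:
    customer_id: str
    subject: str
    priority: str


# Hypothetical contract between OneBot and the CRM.
REQUIRED_FIELDS = ("customer_id", "intent", "summary")


def handle_onebot_webhook(raw_body: str) -> Ticket:
    """Validate the structured payload from the AI module and turn it into a ticket."""
    payload = json.loads(raw_body)
    missing = [field for field in REQUIRED_FIELDS if field not in payload]
    if missing:
        raise ValueError(f"webhook payload missing fields: {missing}")
    priority = "high" if payload["intent"] == "complaint" else "normal"
    return Ticket(payload["customer_id"], payload["summary"], priority)


body = json.dumps({
    "customer_id": "C-1001",
    "intent": "complaint",
    "summary": "Invoice charged twice",
})
ticket = handle_onebot_webhook(body)
print(ticket.priority, "-", ticket.subject)  # high - Invoice charged twice
```

The CRM depends only on the payload fields, so the model behind OneBot can change freely as long as those fields stay the same.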

Key takeaway: Don’t build a “closed” system—build one that is “AI-ready.”

The future of technology doesn’t depend on which cutting-edge AI algorithm you’re using today, but on whether your software architecture is flexible enough to absorb the breakthroughs of tomorrow. Optimizing scalability through microservices, modular design, and asynchronous communication is the essential foundation for sustainable growth in the age of AI.

Are you facing challenges in designing system architecture for AI integration? Contact us to get expert consultation on System Development Best Practices tailored to your business!